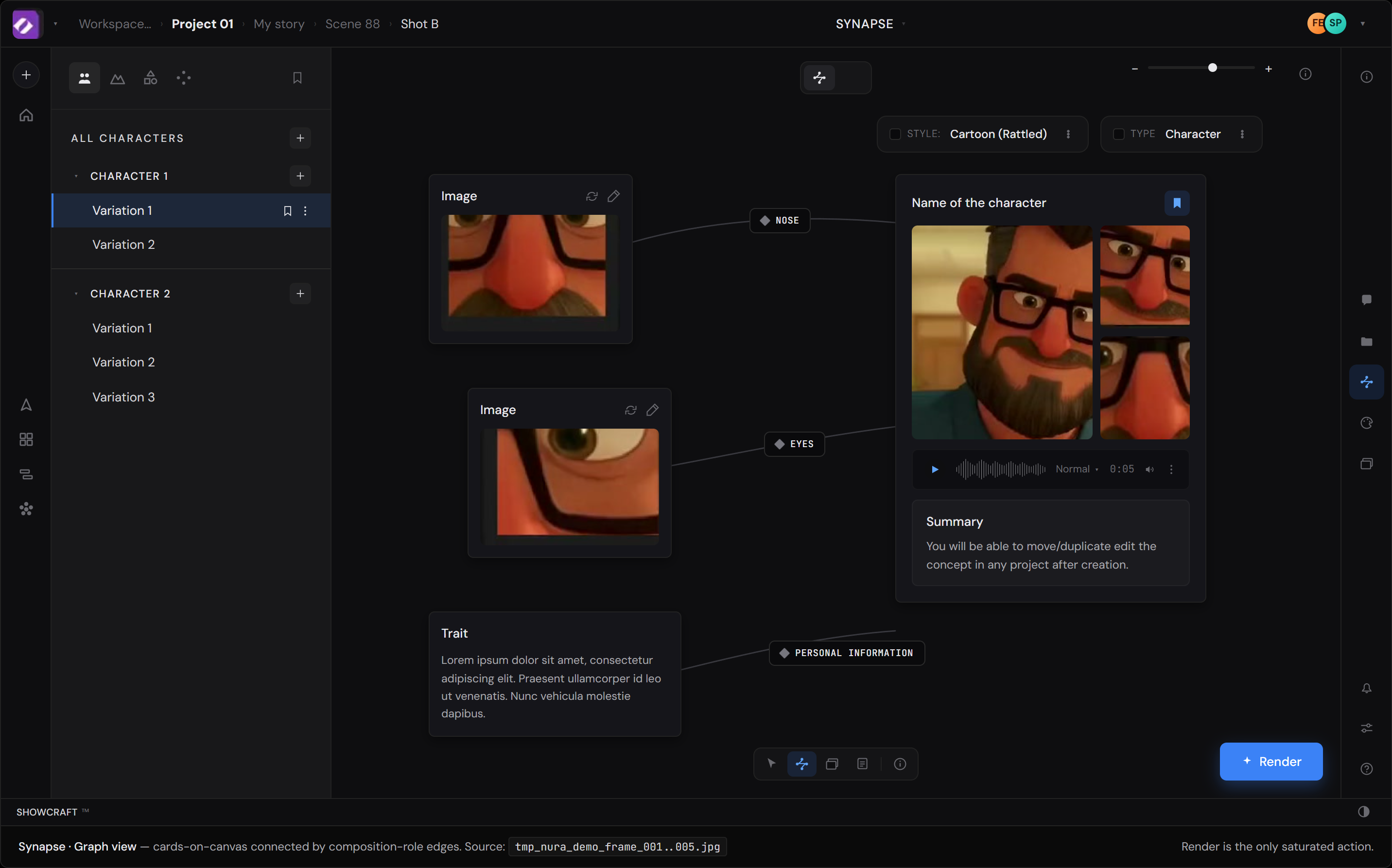

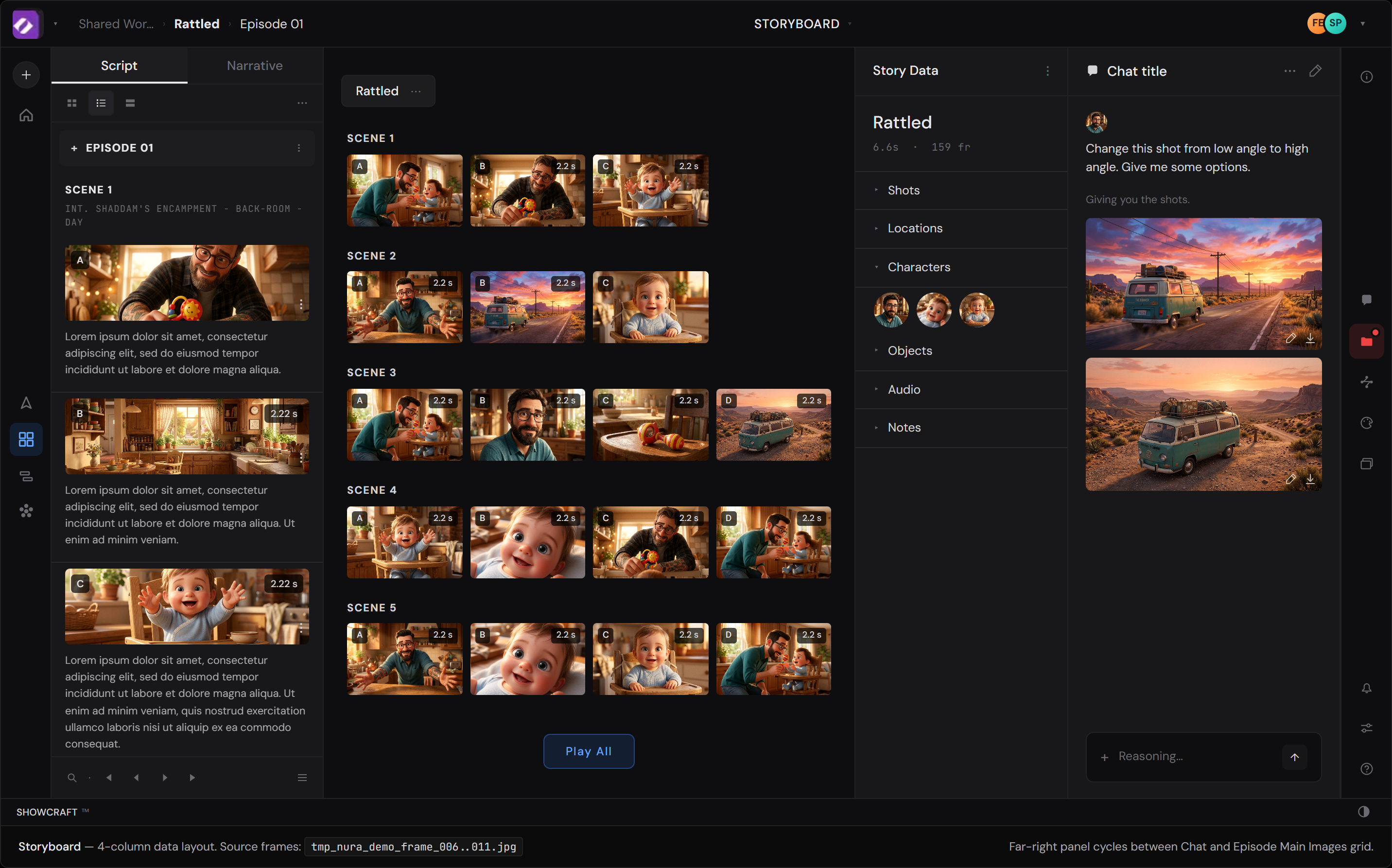

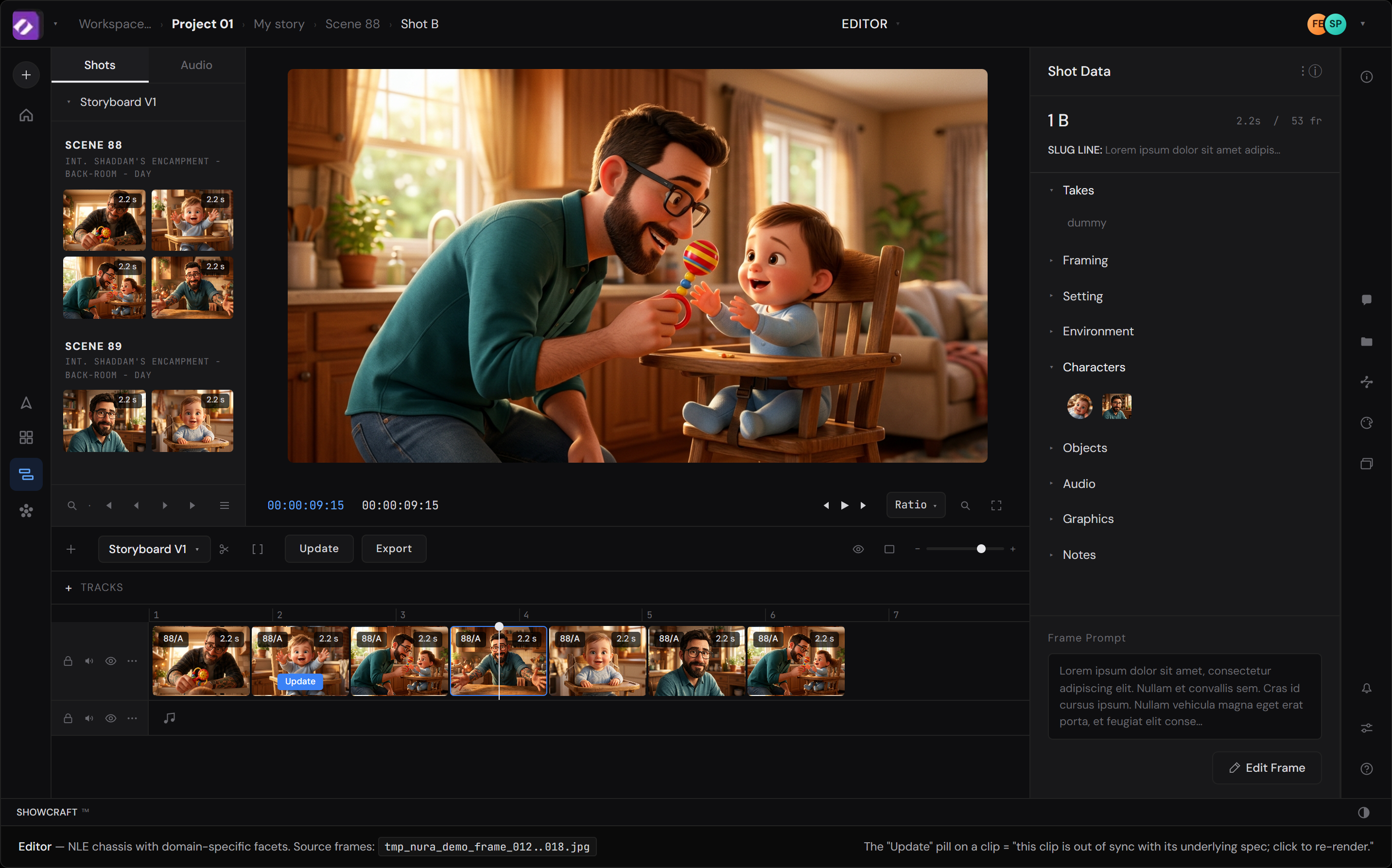

The app, rebuilt 1:1

Each Showcraft workspace has been reconstructed in HTML from the demo video, which was dense-sampled at 6 fps to catch the pans. Open each file in a new tab: they are standalone files that match the actual product chrome at high fidelity. The notes below use these mocks as their baseline, and rebuilding them was half of how the notes were found.

Files: `mocks/synapse.html` · `mocks/storyboard.html` · `mocks/editor.html`